After Disaster Strikes, Technology Planning Is Critical to Helping Hospitals Rebuild

In 2011, a mile-wide, EF5-rated tornado devastated Joplin, Mo., tearing straight through what was then known as St. John’s Regional Medical Center.

The hit to the hospital was catastrophic, causing several deaths and damaging the building to the point that it was declared a total loss. The organization couldn’t preserve any of its IT equipment, which had been battered with windblown debris, soaked in water and trapped inside the structurally compromised facility. And yet, the hospital suffered no data loss as a result of the twister.

“It was a horrible event, but from an IT perspective, the timing was fortuitous in that the hospital had just completed the migration to our data center,” says Scott Richert, vice president for enterprise infrastructure at Mercy Technology Services, which provides centralized IT for more than 40 Mercy hospitals across the Midwest, as well as commercial hospital customers.

The hospital, which was rebuilt and opened in 2015 as Mercy Hospital Joplin, had joined the Mercy system only a couple of years before the tornado struck. Mercy had already replaced the hospital’s network and centralized its IT infrastructure before the event. But before the hospital joined Mercy, its IT systems weren’t prepared to weather a major disaster.

“Their data assets were all local in the hospital,” Richert says. “They had no use of external services. Everything was in the basement. They also didn’t have a full-fledged disaster recovery plan. If this had happened six months before, it would have been a much bigger loss.”

In the immediate aftermath of the storm, Richert and other Mercy IT officials scrambled to support makeshift field operations in Joplin. But once the dust settled, the experience underscored the need for meticulous disaster planning and led to lessons that Richert and the organization have put into practice.

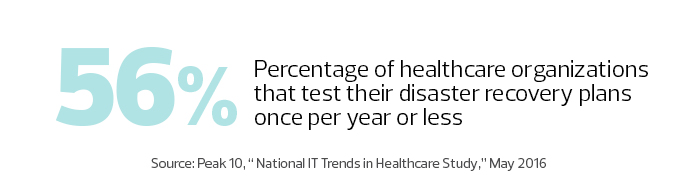

Natural disasters often uncover gaps in disaster recovery plans, helping the affected hospitals — and the entire sector — to fine-tune their processes.

Hospital Disaster Recovery Requires Twice the Work

Given the emergency response role that healthcare institutions play in the wake of natural disasters, Al Berman, president of the Disaster Recovery International Foundation and past president of Disaster Recovery Institute International, says it’s important for hospitals’ disaster recovery plans to be as comprehensive as possible.

“Hospitals suffer probably twice as much from disasters as everybody else,” Berman says. “They have to deal with their own problems, and they have to deal with everyone else’s.”

After the tornado, Mercy officials set up a tent clinic on the edge of the hospital property. The organization borrowed a generator from a carpet cleaning company, then set up a Wi-Fi bridge between the site and a doctors’ office building on Mercy’s wide area network. Six trees blocked the sight line between the two locations, so workers cut them down.

Photo by Jay Fram.

“Even though we had a disaster recovery plan on paper, it was probably 75 percent improvisation,” Richert says. “A chain saw ended up being an integral part of the IT recovery effort.”

Of course, more traditional IT assets also played a significant role in the recovery efforts. A Cisco Voice over IP system, with redundancy to call servers in an offsite data center, proved to be critical, as did battery-powered Ergotron SV42 medical carts equipped with Dell small-form-factor PCs with integrated wireless.

From Makeshift to State-of-the-Art IT

When Hurricane Harvey hit the Houston metro area in August 2017, one hospital in the Harris Health System suffered extensive water leaks, and another experienced a flooded basement. At one of the hospitals, officials had to move approximately 110 patient beds to other areas, requiring IT to reconfigure units to accommodate new workloads and provide clinicians with information about the location of patients.

Harris Health System also set up a clinic at the NRG Convention Center, complete with a full electronic health record system, exam rooms, a pharmacy and lab services.

“The challenge was dealing with the sheer volume of unplanned activity,” says Tim Tindle, executive vice president and CIO. “We relocated departments. We converted conference rooms into temporary offices, and PCs and telephones had to be installed.”

Compounding the workload, flooding prevented some staffers from immediately returning to work. One employee was on the job when his wife called, saying that their house was taking on several feet of water. Another employee had to be dispatched to help her and the couple’s child wade to an evacuation point. “It was frightening,” Tindle says.

When the time came to rebuild in Joplin, Mercy largely employed the practices it follows at other hospitals, such as laying redundant fiber. The 900,000-square-foot facility can withstand winds of up to 250 miles per hour, and leverages technology including an offsite data center, tablets, telemedicine and smart pumps. Still, Richert says, the experience validated some of what he already considered best practices.

“It reinforced our strategy around every data-bearing asset needing to be in the best data center we have,” he says. “When we bought this hospital, telemetry servers ran in network closets. When we build something new, we invest in the network and move all data-bearing assets out of the building.”

Mercy Technology Services also now offers other hospitals cloud-based disaster recovery and backup services supported by the Commvault Data Platform.

During Hurricane Katrina in 2005, flooding in the basement of the New Orleans VA Medical Center ruined the hospital’s IT infrastructure. The Veterans Health Administration had backed up its data at other locations across the country, and when displaced patients visited other VA facilities, clinicians were able to bring up their medical records within a matter of minutes.

Hospitals Seek Higher Ground for Rebuilt IT

Still, when the hospital was rebuilt in 2016, officials opted to locate IT resources on a higher floor to prevent water damage, one of a number of construction considerations meant to make the building more resilient in the face of natural disasters. The facility’s emergency ramp can also double as a boat dock during high flooding, and patient rooms can accommodate two beds, up from the usual one, if needed. Emergency generators are designed to carry the entire load of the hospital for up to five days.

“A lot of the features we have here are a result of Katrina, and improving the design from the beginning,” says Kristen Russell, chief of clinical engineering for the hospital.

In the decade-plus between Katrina and the ribbon-cutting on the new facility, a number of medical processes that previously relied on paper charts were networked, requiring the hospital to create new backup systems. “Right now, we have probably four dozen servers for 1,200 pieces of networked medical equipment,” Russell says. “In 2005, that was not the case.” The hospital now backs up its data from medical processes like telemetry to VA centers in Baton Rouge, La., and Little Rock, Ark.

Harris Health System was already revamping its data center backup practices when Harvey hit, and Tindle says the storm underscored the need for improvement. He watched nervously as the water level rose to around 3 feet on the street abutting the primary data center, and estimates that he would have had to initiate failover procedures had the water risen another 8 to 10 inches.

“We had to calculate the water-rise rate and project when the data center would start to take on water, and then fail it over before that — with all of our people working remotely,” he says.
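The projection Tindle describes can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Harris Health System's actual method: the gauge readings are hypothetical, the rise is assumed to be linear, and the 45-inch threshold simply adds roughly 9 inches (the middle of Tindle's 8-to-10-inch estimate) to the 3 feet of water already on the street. The four-hour and 20-minute failover durations are the figures the organization cites for its old and new tooling.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    hours: float   # time of the reading, in hours since the first one
    inches: float  # water depth at the street gauge, in inches


def rise_rate(readings):
    """Average rise in inches per hour between the first and last reading."""
    first, last = readings[0], readings[-1]
    elapsed = last.hours - first.hours
    if elapsed <= 0:
        raise ValueError("readings must span a positive amount of time")
    return (last.inches - first.inches) / elapsed


def hours_until_failover_start(readings, threshold_inches, failover_hours):
    """Hours from the last reading until failover has to begin.

    Returns None if the water is not rising. A result of zero or less
    means failover should already be under way.
    """
    rate = rise_rate(readings)
    if rate <= 0:
        return None
    remaining_inches = threshold_inches - readings[-1].inches
    hours_to_threshold = remaining_inches / rate
    return hours_to_threshold - failover_hours


# Hypothetical readings: the water climbs from 30 to 36 inches over 4 hours.
readings = [Reading(0, 30), Reading(2, 33), Reading(4, 36)]

# Assumed threshold: 3 feet (36 inches) plus about 9 more inches.
THRESHOLD_INCHES = 45

slow = hours_until_failover_start(readings, THRESHOLD_INCHES, failover_hours=4)
fast = hours_until_failover_start(readings, THRESHOLD_INCHES, failover_hours=20 / 60)

print(f"Rise rate: {rise_rate(readings)} inches per hour")
print(f"Four-hour failover must start within {slow} hours")
print(f"20-minute failover must start within {fast:.2f} hours")
```

With these made-up readings, the four-hour failover leaves about two hours of margin, while the 20-minute failover leaves more than five and a half. A real plan would likely use more conservative estimates, since floodwater rarely rises at a steady rate.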

When It Comes to Disaster Recovery, Plan for Adjustments

The situation could have been worse. The backup data center had been in place for only about a year. “I can’t imagine what my blood pressure would have been” if the secondary site hadn’t been ready, Tindle says. The organization is currently relocating its primary data center to a colocation facility and has invested in a tool to cut failover time from four hours to just 20 minutes.

Tindle says in the future he’ll deploy staffers to the backup facility during an emergency. He also plans to change the makeup of the “ride-out” team so employees with families can prioritize getting loved ones out of harm’s way.

While no disaster recovery plan is perfect, Tindle says such initiatives put resources in place that allow organizations to adjust to challenges on the fly.

“The best-laid plans go out the window as soon as the specifics of any given disaster actually strike,” he says. “What saves you is, if you have the resources and the people, you have the ability to put a new plan in place and reconfigure your response. If you don’t, you’re going to be in deep trouble.”